AI in Election Campaigns: Are New Zealand’s Rules Falling Behind?

As AI quietly rewrites political messaging, New Zealand faces a critical question: can its election laws keep pace before trust in democracy starts to erode?

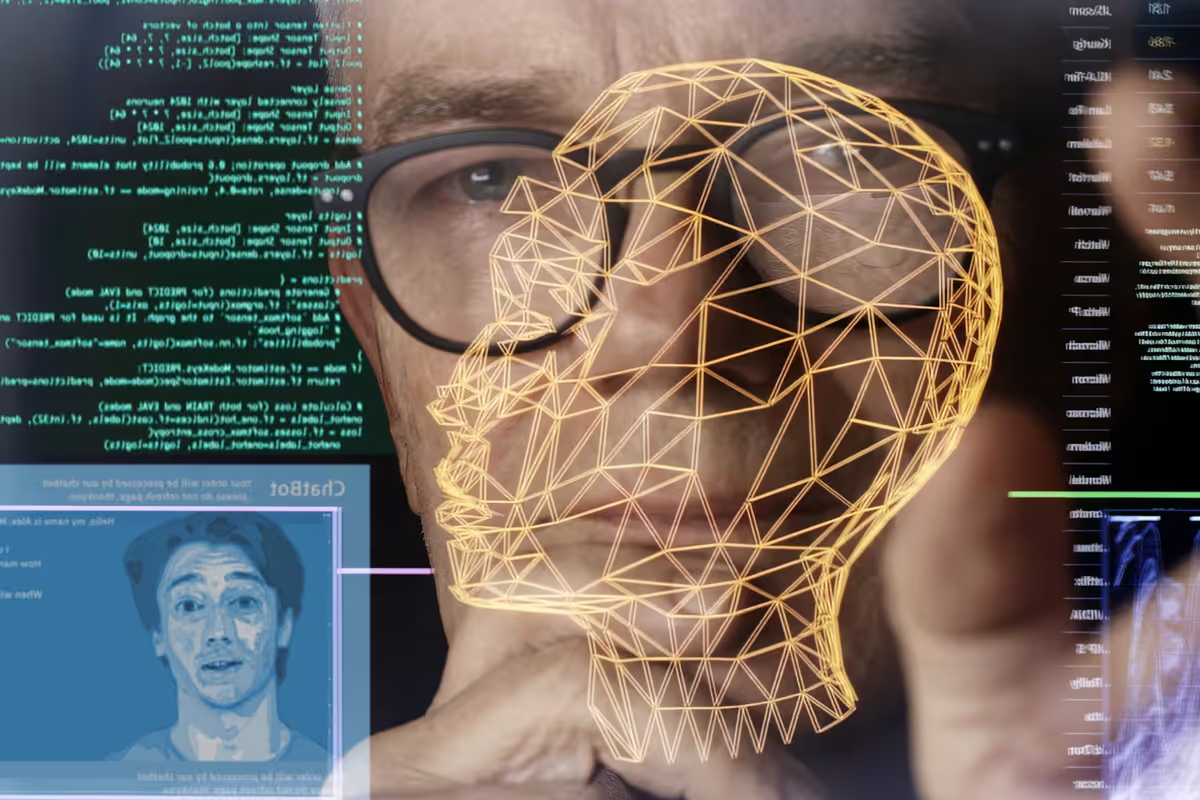

Artificial intelligence is no longer a future threat to democracy. It is already shaping political messaging today. From synthetic voices to hyper-targeted ads, AI in election campaigns is quietly transforming how voters are reached. The question is not whether this technology exists but whether regulation can keep up.

A recent analysis published in The Conversation warns that New Zealand’s electoral framework is not fully prepared for the scale and speed of AI-driven political tools. And the implications stretch far beyond one country.

How AI in Election Campaigns Is Already Being Used

Globally, political campaigns are using AI to draft speeches, personalize ads, analyze voter sentiment, and automate outreach. Generative tools such as ChatGPT and other large language models can create tailored political messages in seconds. Deepfake technology can replicate voices or faces with increasing realism.

According to a 2023 report by the World Economic Forum, generative AI significantly lowers the cost of producing persuasive content at scale. Meanwhile, MIT Technology Review has highlighted the growing risk of synthetic media being used in political misinformation.

In practical terms, AI in election campaigns enables microtargeting at a level previously unattainable. Campaigns can test hundreds of message variations, optimize for emotional response, and refine narratives in real time. For voters, this can mean more relevant communication. For democracy, it raises serious transparency concerns, because no one outside the campaign can see the full range of messages being sent.

Why New Zealand’s Electoral Rules May Not Be Ready

New Zealand’s electoral laws were written before generative AI tools became mainstream. Current rules focus on transparency in political advertising and on spending limits. They do not specifically address AI-generated content, deepfakes, or automated persuasion systems.

The core issue is disclosure. If a political message is written or voiced by AI, should voters be told? If a candidate’s likeness is synthetically altered, at what point does that become misinformation?

Experts argue that without clear definitions, enforcement becomes difficult. AI in election campaigns operates in gray areas where intent, authorship, and authenticity blur together.

The Ethical Risks: Misinformation and Trust

Trust is the foundation of democratic systems. AI introduces new vulnerabilities.

Deepfake videos can damage reputations within hours. Synthetic robocalls can spread misleading information at scale. Hyper-personalized messaging may fragment public discourse, as voters receive tailored narratives that others never see.

Research from the Stanford Internet Observatory has shown how generative AI tools can be misused to produce convincing false political narratives quickly and cheaply. The barrier to entry is low, which increases the risk of malicious actors interfering in elections.

At the same time, banning AI outright is unrealistic. Campaigns will continue to adopt digital tools to remain competitive.

Balancing Innovation and Safeguards

The path forward likely involves updated disclosure requirements, clearer definitions of synthetic media, and stronger enforcement mechanisms. Some jurisdictions are already exploring mandatory labeling of AI-generated political ads.

For New Zealand, the debate around AI in election campaigns is an opportunity to modernize electoral law before a major crisis occurs. Setting rules in advance is likely to be more effective than trying to contain the damage after trust has already been lost.

What This Means for Voters

Voters should assume that political content may increasingly be AI-assisted. Media literacy is becoming as important as traditional civic awareness.

Governments must prioritize transparency. Platforms must improve detection systems. And citizens must ask critical questions about the content they consume.

Artificial intelligence is not inherently anti-democratic. But without guardrails, its power can erode trust. The next election cycle may test how prepared institutions truly are.

Fast Facts: AI in Election Campaigns Explained

What is AI in election campaigns?

AI in election campaigns refers to the use of artificial intelligence tools to create, target, or distribute political messages. This includes chatbots, generative text, deepfake videos, and automated voter analysis systems.

How can AI in election campaigns influence voters?

AI in election campaigns can personalize political ads, generate persuasive content quickly, and analyze voter behavior. This can improve engagement, but it also increases the risk of manipulation and misinformation.

What are the main risks of AI in election campaigns?

The biggest risks of AI in election campaigns are deepfakes, misinformation, and a lack of transparency, all of which can erode public trust if voters cannot distinguish authentic content from synthetic material.