AI in Personalized Medicine: The Ethical Quandary of Diagnostic Bias

Explore how AI diagnostic systems amplify racial and ethnic healthcare disparities. Review the current evidence on algorithmic bias in personalized medicine and the mitigation strategies for equitable AI deployment in 2025.

Imagine a patient lying in an emergency room, their symptoms identical to the person next to them. Yet the AI-powered diagnostic system recommends two entirely different treatment paths. The only difference? Race. This isn't science fiction. It's happening in hospitals across the world right now.

As artificial intelligence increasingly powers personalized medicine, a troubling paradox emerges: the same algorithms designed to tailor treatments to individual patients are simultaneously encoding and amplifying healthcare inequities.

While AI diagnostics have achieved remarkable accuracy rates exceeding 90% in some cases, these performance gains mask a deeper crisis that tech developers and healthcare leaders are only beginning to confront head-on.

The Promise and the Problem: Where Personalized Medicine Meets Algorithmic Bias

Personalized medicine represents one of AI's most laudable applications. Machine learning algorithms analyze vast datasets containing medical histories, genetic profiles, test results, and clinical guidelines to develop individualized treatment strategies that traditional medicine simply cannot match.

The results are compelling: predictive models identify hidden disease patterns, enable early interventions, and optimize resource allocation in ways that save lives.

Yet this promise comes with a serious flaw. The FDA had authorized 882 AI-enabled medical devices as of May 2024, 671 of them for radiology. That scale offers little reassurance if these tools perform differently across demographic groups. And they do.

A critical 2024 study from the University of Michigan discovered that white patients receive medical testing at rates up to 4.5% higher than Black patients presenting with identical age, sex, medical complaints, and emergency department triage scores.

When AI systems trained on this biased data reach clinical deployment, they systematically underestimate illness severity in Black populations. The result: physicians receive algorithmic recommendations that perpetuate existing disparities rather than correct them.
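A small worked example shows why under-testing alone is enough to distort what a model learns. It is only a sketch under stated assumptions: the prevalence and testing rates are hypothetical, illness is assumed to be recorded only when a test is ordered, and the function name `observed_positive_rate` is made up for illustration. None of these numbers come from the Michigan study.

```python
def observed_positive_rate(true_prevalence: float, testing_rate: float) -> float:
    """Share of patients whose illness actually appears in the medical record.

    Illustrative assumption: illness is recorded only when a test is ordered,
    and the test itself is perfectly accurate.
    """
    return true_prevalence * testing_rate


# Hypothetical numbers: both groups are equally sick, but group B is tested less.
TRUE_PREVALENCE = 0.10
rate_group_a = observed_positive_rate(TRUE_PREVALENCE, testing_rate=0.60)
rate_group_b = observed_positive_rate(TRUE_PREVALENCE, testing_rate=0.50)

print(f"group A recorded illness rate: {rate_group_a:.3f}")
print(f"group B recorded illness rate: {rate_group_b:.3f}")
```

Under these assumptions the record shows group B as healthier than group A even though the true prevalence is identical, so a model trained on the recorded labels would learn to assign lower risk to group B.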

How Bias Gets Baked Into the Algorithm

Diagnostic bias doesn't emerge from malicious intent; it crystallizes through structural inequities embedded in training data itself. Healthcare algorithms learn from historical medical records that reflect decades of unequal treatment, underdiagnosis in minority populations, and documented racial disparities in clinical testing.

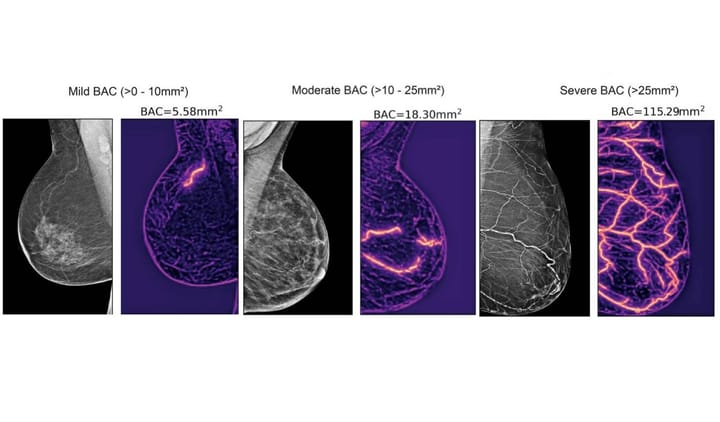

Consider what happens with dermatology AI systems. A Northwestern University study examining skin condition diagnostics found that when deep learning systems assisted primary care physicians, accuracy improved 69% overall.

But here's the concerning part: accuracy gains for patients with light skin outpaced those for patients with dark skin. The AI exacerbated existing diagnostic disparities by 5 percentage points among physicians with minimal experience treating darker-skinned patients.
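The arithmetic behind a widening gap is worth making explicit, because both groups can improve while the disparity still grows. The accuracy figures below are hypothetical and chosen only so the gap widens by 5 percentage points; they are not the study's reported values, and `accuracy_gap` is an illustrative helper.

```python
def accuracy_gap(acc_light: float, acc_dark: float) -> float:
    """Difference in diagnostic accuracy between two groups, in percentage points."""
    return acc_light - acc_dark


# Hypothetical accuracies (percent) without and with AI assistance.
without_ai = {"light": 40.0, "dark": 36.0}
with_ai = {"light": 66.0, "dark": 57.0}

gap_before = accuracy_gap(without_ai["light"], without_ai["dark"])
gap_after = accuracy_gap(with_ai["light"], with_ai["dark"])
widening = gap_after - gap_before

print(f"gap without AI: {gap_before:.1f} points")
print(f"gap with AI:    {gap_after:.1f} points")
print(f"widening:       {widening:.1f} points")
```

In this toy case accuracy rises for everyone, yet the gap grows from 4 to 9 points. This is why overall accuracy gains cannot be read as evidence of equitable performance.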

The mechanism is subtle yet devastating. MIT researchers discovered that medical imaging models most accurate at demographic prediction also show the largest fairness gaps. These models are learning demographic shortcuts instead of genuine diagnostic features.

They're using demographic attributes such as race and sex as proxy variables, leading to systematically incorrect results for women, Black patients, and other underrepresented groups.

Psychiatric AI systems show similar patterns. A 2025 study across leading large language models found that when patient race was explicitly stated or implied, systems often proposed inferior treatments.

Diagnostic recommendations showed minimal bias, but treatment planning differed markedly based on the patient's racial or ethnic background.

The Amplification Effect: When AI Makes Disparities Worse

The most troubling aspect of AI bias isn't that it perpetuates existing inequities; it's that it amplifies them at scale. Before AI deployment, diagnostic errors affected approximately 5% of the population annually, arising one clinical encounter at a time. Now a single flawed algorithm can repeat the same error in treatment decisions for millions of patients simultaneously.

Real-world consequences are severe. Studies reveal that Black patients experience mortality rates nearly 30% higher than non-Hispanic white patients. While this disparity stems from multiple factors including socioeconomic conditions and healthcare access, algorithmic bias now adds another layer of disadvantage. AI systems that underestimate illness severity in Black patients actively contribute to delayed diagnoses and suboptimal treatment allocation.

The problem extends beyond demographics included in training data. If algorithms lack robust socioeconomic information or cultural context about patients' daily lives, treatment recommendations fail to account for structural barriers to compliance.

A physician might receive an algorithmic recommendation that assumes a patient can access specialized care, maintain complex medication schedules, or travel to follow-up appointments. For underserved populations facing transportation challenges, housing instability, or economic constraints, these recommendations become clinically meaningless.

The Accountability Vacuum: Who Bears Responsibility?

This raises a critical governance question that no regulatory framework currently answers adequately: when AI diagnostics fail, who is accountable?

Developers design systems without interacting with patients or understanding clinical realities. Healthcare institutions deploy AI tools into workflows without established post-market surveillance or incident response procedures.

Clinicians make final care decisions but often cannot meaningfully challenge "black box" outputs while still bearing legal liability for adverse outcomes. Patients may not even know AI informed their diagnosis.

This diffused responsibility creates a dangerous accountability void. A 2025 review in Frontiers in Medicine argues that effective AI deployment requires clear frameworks distributing responsibility fairly among developers, healthcare providers, regulators, and patients. Currently, such frameworks remain largely aspirational rather than implemented.

Informed consent represents another overlooked dimension. Patients should be told how AI informs their care, what its benefits and limitations are, and which risks stem from model bias or limited explainability. In practice, most patients receive no such disclosure.

Path Forward: Technical Solutions and Systemic Change

The challenge is solvable, though solutions demand more than technical innovation alone. Leading companies and research institutions are developing promising approaches:

Developers are implementing bias audits throughout the AI lifecycle, not just at deployment. Rather than training exclusively on historical data, some systems now incorporate synthetic data for underrepresented populations based on clinical research and population statistics. Others are developing explainable AI frameworks that reveal how algorithms reach specific recommendations, enabling clinicians to catch and correct suspicious outputs.
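One simple form a bias audit can take is comparing a model's sensitivity (true positive rate) across demographic groups and flagging any group that trails the best-performing one. The sketch below is a minimal illustration, not any particular vendor's audit procedure. The function names, the toy data, and the 0.05 tolerance are all assumptions.

```python
from collections import defaultdict


def sensitivity_by_group(y_true, y_pred, groups):
    """Return {group: true positive rate}, computed over truly ill patients only."""
    hits = defaultdict(int)
    positives = defaultdict(int)
    for truth, pred, group in zip(y_true, y_pred, groups):
        if truth == 1:
            positives[group] += 1
            hits[group] += int(pred == 1)
    return {g: hits[g] / positives[g] for g in positives}


def flag_disparities(rates, tolerance=0.05):
    """List groups whose rate trails the best group by more than `tolerance`."""
    best = max(rates.values())
    return sorted(g for g, r in rates.items() if best - r > tolerance)


# Toy data: 1 = condition present / predicted, 0 = absent.
y_true = [1, 1, 1, 1, 0, 1, 1, 1, 1, 0]
y_pred = [1, 1, 1, 0, 0, 1, 1, 0, 0, 0]
groups = ["A"] * 5 + ["B"] * 5

rates = sensitivity_by_group(y_true, y_pred, groups)
flagged = flag_disparities(rates)
print(rates, flagged)
```

Here the model catches three of four true cases in group A but only two of four in group B, so group B is flagged. Running a check like this at each stage of the lifecycle, rather than once at deployment, is what distinguishes an ongoing audit from a one-off validation.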

University of Michigan researchers developed a practical algorithm that corrects for testing disparities without discarding patient records. When applied to sepsis detection, the corrected algorithm achieved accuracy comparable to models trained on idealized, unbiased data. This approach demonstrates that bias adjustment is possible even with flawed real-world datasets.

Yet technical solutions alone prove insufficient. The research community emphasizes that reducing diagnostic bias requires collective action across healthcare stakeholders, AI developers, policymakers, and ethicists. This includes rigorous pre-deployment evaluation of AI tools, comprehensive clinician training on both system capabilities and limitations, and ongoing performance monitoring with intervention when safety issues emerge.

Data transparency represents a critical requirement. Healthcare institutions must examine how data enters algorithmic systems and whether it reflects the populations being served. Regulatory bodies should mandate public disclosure of system limitations, particularly performance disparities across demographic groups. Privacy-preserving approaches like federated learning, where models train across multiple decentralized datasets, could improve representation while protecting patient data.
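The aggregation step at the heart of federated learning can be sketched in a few lines. This is a minimal illustration of federated averaging under simplifying assumptions: each hospital has already trained locally and shares only its parameter vector and record count, and the hospital names and numbers are hypothetical. Local training, secure communication, and privacy safeguards are omitted.

```python
def federated_average(client_weights, client_sizes):
    """Average client parameter vectors, weighting each by its number of records."""
    total = sum(client_sizes)
    n_params = len(client_weights[0])
    return [
        sum(w[i] * n for w, n in zip(client_weights, client_sizes)) / total
        for i in range(n_params)
    ]


# Hypothetical parameters from two hospitals; patient records never leave either site.
hospital_weights = [[0.2, 1.0], [0.6, 3.0]]
hospital_sizes = [100, 300]

global_weights = federated_average(hospital_weights, hospital_sizes)
print(global_weights)
```

Weighting by record count means larger sites pull the shared model toward their data. A deployment focused on representation would need to check that smaller sites serving underrepresented populations are not drowned out by that weighting.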

The Bigger Picture: AI as a Mirror

AI in healthcare isn't merely a tool; it's a mirror reflecting our collective biases and structural inequities. As one Harvard Medical School educator noted, these systems learn from datasets that are themselves products of human decision-making processes. What appears to be objective algorithmic output is fundamentally shaped by the historical choices embedded in training data.

This recognition offers both warning and opportunity. The warning is clear: deploying AI without addressing underlying healthcare disparities will cement inequity into medical infrastructure at unprecedented scale.

The opportunity lies in using AI deployment as a catalyst for systemic change. Confronting algorithmic bias forces healthcare systems to confront the human biases that created biased data in the first place.

Personalized medicine holds genuine potential to improve outcomes, enable early intervention, and democratize access to sophisticated diagnostics. But realizing this potential requires absolute commitment to equity at every stage of development, deployment, and surveillance.

The technology itself is merely the amplifier. The real work lies in ensuring that what gets amplified reflects our stated commitment to just and equitable healthcare for all patients, regardless of race, ethnicity, gender, or socioeconomic status.

The question facing the healthcare and AI communities today isn't whether algorithmic bias exists. We now know it does, deeply and systematically. The question is whether we'll act with sufficient urgency to build the equitable systems that personalized medicine promises but has not yet delivered.

Fast Facts: AI Diagnostic Bias in Personalized Medicine Explained

What exactly is diagnostic bias in AI-driven personalized medicine?

Diagnostic bias occurs when AI algorithms trained on historically inequitable healthcare data systematically perform worse for certain demographic groups. A 2024 University of Michigan study found that white patients receive medical testing at rates up to 4.5% higher than Black patients with identical presentations, and when AI learns from this biased data, it perpetuates and amplifies these disparities in clinical recommendations.

How does diagnostic bias actually affect patient outcomes?

When primary care physicians use AI diagnostic assistance, accuracy improves 69% overall, but gains for light-skinned patients outpace those for dark-skinned patients. At the same time, algorithms may underestimate illness severity in underrepresented populations, leading to delayed diagnoses, suboptimal treatment allocation, and preventable health complications at scale across millions of patients.

What's being done to correct algorithmic bias in personalized medicine?

Solutions include bias audits throughout the AI lifecycle, synthetic data for underrepresented groups, explainable AI frameworks, and regulatory mandates for performance transparency across demographics. Federated learning approaches enable model training across decentralized datasets while preserving privacy and improving population representation.